ABSTRACT

A Count-Min Sketch is a space-efficient, probabilistic data structure that functions like a frequency table. While a Hash Map stores every key-value pair, the Count-Min Sketch uses a fixed-size 2D array to provide an over-estimate of an element’s frequency. It is the “big brother” of the Bloom Filter, trading exact counts for massive memory savings in high-volume data streams.

1. The Memory Wall: Netflix Scale

Imagine tracking the view counts of every episode on Netflix over 48 hours.

- The Hash Map Problem: Storing millions of unique episode IDs as keys and their 64-bit integer counts as values would consume gigabytes of RAM. As the lead engineer, your system would crash under the weight of this metadata.

- The Solution: Instead of storing the keys themselves, we use a Count-Min Sketch. It allows us to track frequencies using a constant amount of memory, regardless of how many unique episodes exist.

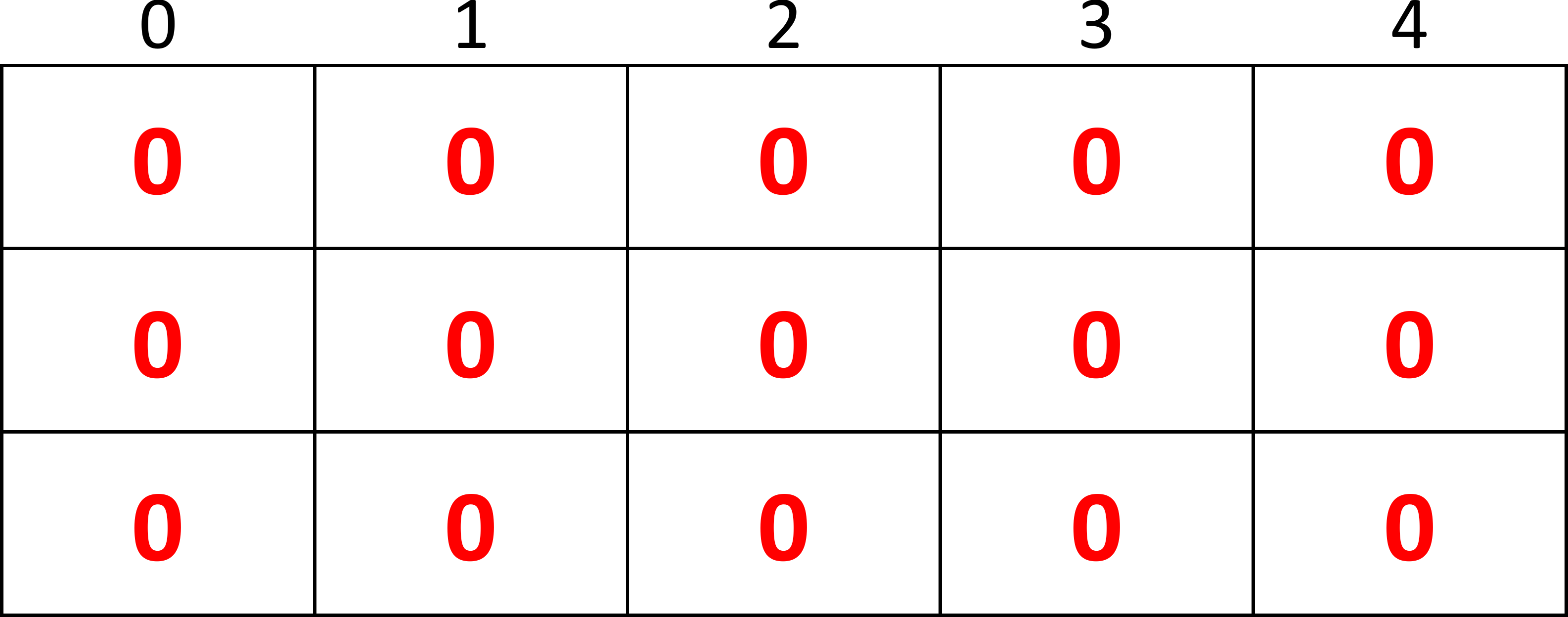

2. Structure and Mechanism

A Count-Min Sketch consists of a 2D array (matrix) with:

- rows: Each row corresponds to a unique hash function.

- columns: The range of each hash function.

Incrementing a Count

To record an event (e.g., a user watches an episode of Friends):

- Pass the episode ID through each of the hash functions.

- Each function gives you a column index for its specific row.

- Increment the counter in each of those cells.

Estimating a Count (The “Find” Operation)

Because different keys might hash to the same cells (collisions), the values in the cells can be higher than the actual count of a specific key.

- Retrieve the values from the hashed positions.

- The minimum of these values is your estimate.

Why the minimum?

Since collisions only ever increase the values in the cells, the smallest value among all rows is guaranteed to be the “cleanest” estimate. While this minimum can still be an over-estimate, the true count will never be greater than this value.

3. Mathematical Design

To minimize the error in our estimates, we design the dimensions of the sketch based on our tolerance for error () and our desired confidence level ():

- Width (Columns ): . More columns reduce the chance of collisions in any single row.

- Depth (Rows ): . More rows (hash functions) decrease the probability that every row will have a significant collision for a specific key.

4. Pseudocode

increment(x)

increment(x):

for i from 0 to k-1:

column = hash_functions[i](x) % m

matrix[i][column] += 1estimate(x)

estimate(x):

min_val = infinity

for i from 0 to k-1:

column = hash_functions[i](x) % m

current_val = matrix[i][column]

if current_val < min_val:

min_val = current_val

return min_val5. Summary Comparison

| Feature | Hash Map | Count-Min Sketch |

|---|---|---|

| Accuracy | 100% Precise | Probabilistic (Over-estimates) |

| Memory | — Grows with unique keys | — Fixed size |

| Keys Stored | Yes | No |

| Best Use Case | Small/Medium datasets | Heavy-hitter detection in massive streams |