ABSTRACT

The fundamental difference between algorithm types lies in their consistency and reliability. While Deterministic algorithms offer a predictable path, Randomized algorithms leverage probability to optimize for speed or simplicity, trading off either consistent runtime (Las Vegas) or guaranteed correctness (Monte Carlo).

1. Deterministic Algorithms

A deterministic algorithm is a “pure function” of its input.

- Consistency: Given input , it will always follow the same execution path and produce the same output .

- Predictability: The runtime is fixed for any specific input.

- Examples: Selection Sort, Merge Sort, and Binary Search.

2. Randomized Algorithms

These algorithms accept a second hidden input: a source of random bits. This randomness allows the algorithm to make “choices” during execution.

A. Las Vegas Algorithms

“Always correct, sometimes slow.”

A Las Vegas algorithm uses randomness to avoid “bad” cases. It will never give you the wrong answer, but you cannot guarantee exactly how long it will take.

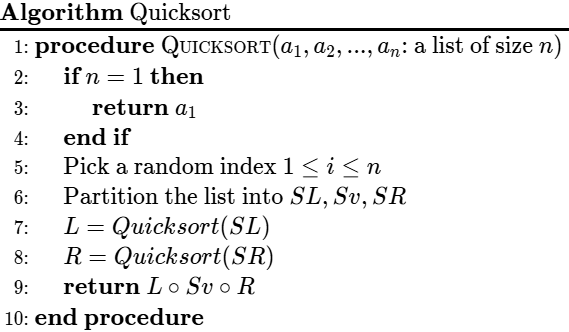

Quicksort (Randomized)

Quicksort is the quintessential Las Vegas algorithm. By picking a random pivot, we ensure that no specific input (like an already sorted list) can consistently trigger the worst-case.

- Logic:

- Pick a random element as a Pivot.

- Partition the array: elements pivot to the left, elements pivot to the right.

- Recurse on both halves.

- Runtime Complexity:

- Best/Average Case: — Occurs when the pivot splits the list roughly in half.

- Worst Case: — Occurs if the pivot is always the extreme (min/max), but the probability of this happening with random pivots is nearly zero for large .

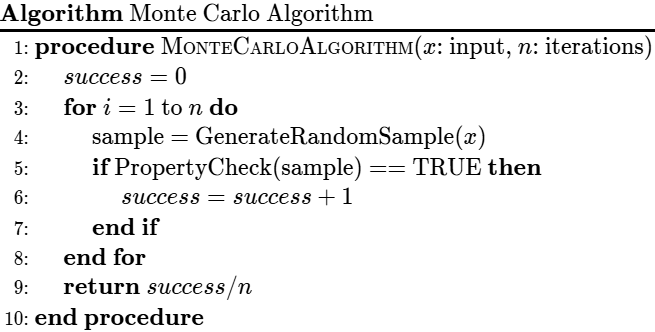

B. Monte Carlo Algorithms

“Always fast, sometimes wrong.”

A Monte Carlo algorithm has a fixed execution time, but there is a small, calculable probability that the output is incorrect. We usually “boost” the correctness by running the algorithm multiple times.

- Logic: It samples the search space. The more time you give it (more iterations), the higher the probability of success.

- Example: Primality Testing (Miller-Rabin). It can tell you if a number is prime very quickly, but there is a tiny chance it labels a composite number as prime.

- Example: Estimating by randomly dropping needles on a grid.

3. Comparison Summary

| Feature | Deterministic | Las Vegas (Randomized) | Monte Carlo (Randomized) |

|---|---|---|---|

| Output | Always Correct | Always Correct | Probability of Error |

| Runtime | Fixed for input | Variable (Random) | Fixed/Deterministic |

| Primary Goal | Reliability | Avoid Worst-Case Scenarios | Speed in massive search spaces |

| Example | Merge Sort | Randomized Quicksort | Miller-Rabin Primality Test |

4. Why Use Randomness?

- Symmetry Breaking: In distributed systems, randomness helps multiple computers decide who goes first without a central leader.

- Defeating Adversaries: If an attacker knows your deterministic pivot-picking strategy, they can give you an input that makes your server crash (). Randomization makes your “weakness” impossible to predict.

- Simplicity: Often, a randomized algorithm is much shorter and easier to implement than a complex deterministic one that achieves the same average runtime.