INFO

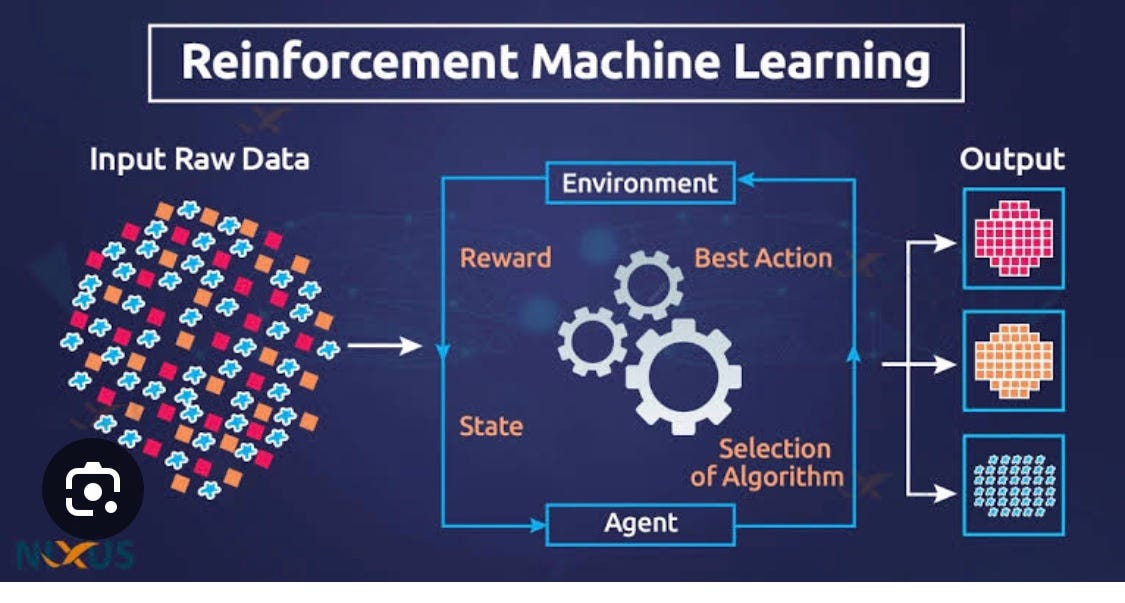

This section covers deep reinforcement learning (DRL) algorithms that combine neural networks with reinforcement learning principles to solve complex decision-making tasks in high-dimensional environments.

Overview

Reinforced Deep Learning Models leverage deep architectures to approximate policies, value functions, or both. These models are especially effective in environments with:

- Continuous or discrete action spaces

- Sparse or delayed rewards

- High-dimensional state representations

They are commonly used in robotics, game AI, autonomous systems, and industrial control.

Included Models

A value-based method that uses a deep neural network to approximate the Q-function. Introduced experience replay and target networks for stability.

An off-policy actor-critic algorithm for continuous action spaces. Combines deterministic policy gradients with Q-learning.

A policy optimization algorithm that balances exploration and stability using clipped surrogate objectives.

Key Concepts

- Policy Networks: Learn to map states to actions

- Value Networks: Estimate expected future rewards

- Exploration Strategies: Balance between trying new actions and exploiting known ones

- Replay Buffers: Store past experiences for off-policy learning

- Target Networks: Stabilize training by decoupling updates

- Gradient-Based Optimization: Used to update neural parameters

Suggested Links

- Reinforcement Learning ← Broader RL context

- Supervised Deep Learning ← For hybrid comparisons

- Hybrid Deep Learning Models ← For models combining DRL with other paradigms

Use Cases

- Autonomous Navigation

- Robotic Manipulation

- Game Playing Agents

- Industrial Control Systems

- Financial Portfolio Optimization